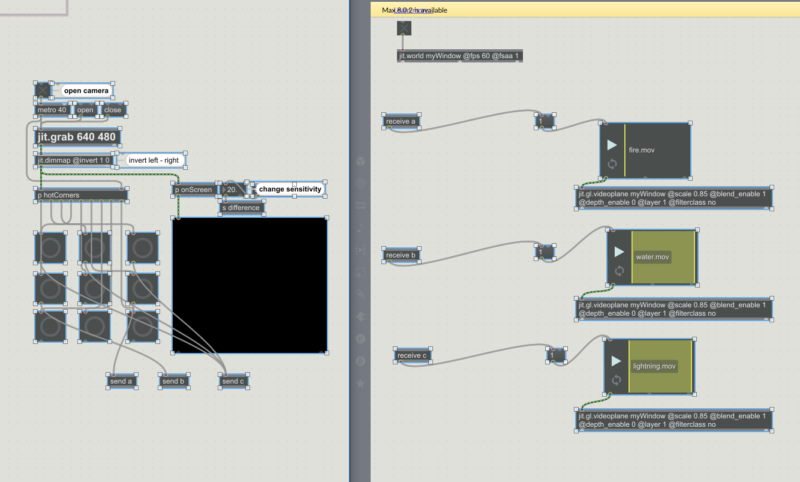

My inspiration for this project was “Avatar: The Last Airbender”. I used the “grabhotspot” patch to detect movement in certain areas of the screen and play the corresponding animations. The mechanics are simple with the help of “send” and “receive” objects. The animations weren’t any trouble to make. My problems with this project came mostly from an incompatible webcam, which I had to replace to finish the project.
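For readers who want the hotspot idea in text form, here is a rough Python sketch. It is not the actual patch and makes no claim about how “grabhotspot” works internally. It assumes grayscale frames stored as lists of rows, and the function names, the region names (`fire`, `water`) and the threshold are all made up for illustration.

```python
def hotspot_motion(prev, curr, region, threshold=30):
    """Return True if the mean absolute pixel change inside region exceeds threshold.

    region is (x0, y0, x1, y1), with x1 and y1 exclusive (hypothetical convention).
    """
    x0, y0, x1, y1 = region
    total = 0
    count = 0
    for y in range(y0, y1):
        for x in range(x0, x1):
            total += abs(curr[y][x] - prev[y][x])
            count += 1
    return count > 0 and total / count > threshold


def triggered_animations(prev, curr, hotspots, threshold=30):
    """Return the names of all hotspots that saw movement between two frames."""
    return [
        name
        for name, region in hotspots.items()
        if hotspot_motion(prev, curr, region, threshold)
    ]


# Two tiny 4x4 grayscale frames: only the top-left corner changes.
prev_frame = [[0] * 4 for _ in range(4)]
curr_frame = [[0] * 4 for _ in range(4)]
for y in range(2):
    for x in range(2):
        curr_frame[y][x] = 255

# Hypothetical hotspot layout; the names are placeholders.
hotspots = {"fire": (0, 0, 2, 2), "water": (2, 2, 4, 4)}
print(triggered_animations(prev_frame, curr_frame, hotspots))
```

Each name returned here plays the role of a message sent to the animation that should play, roughly like pairing a “send” with a matching “receive”.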

Thai Dao – 11/30/2018

From the video in the post, it looks like there were only three animations.

Is there some reason why you didn’t have four to better emulate your inspiration of Avatar: The Last Airbender (e.g. make the top one based on air and make a bottom one based on earth)?

Good use of space with the animations. I like the natural feel of them because they were drawn instead of cut out from another image.

Nice animations, Thai! One suggestion I have would be to implement some larger gestures. Perhaps you could record the order the bangs were hit in to trigger events when you sweep across the entire screen or something similar.

Good job Thai! One suggestion I would add is some more gestures. Maybe facing the camera would also help interaction since you sorta sit sideways.

Very creative with using hand-drawn animations along with the live video feed. If you could add more elements, what would they be?

I like the combination of live-action footage with hand-drawn animation. I also like that you are kind of wielding the different elements as you move your hand. It would be cool if you had all four elements to be even more accurate to The Last Airbender.

The combination of gesture and magical animation effects is impressive. It would be better if there were a background.