Interactive Real-time Light Painting (IRLP) combines technology and art, using robotics to make light painting more accessible and interactive for artists and everyday users. Users can control robots from mobile devices, moving them to the desired positions while selecting different “brushes,” the colors and patterns of the LEDs on the robots, to paint with. The light painting software captures the painting in real time, allowing users to see and edit their results immediately.

The intent behind the project was to provide a new medium for creating light paintings. Light painting can be a challenging process that takes dedication and practice to produce the desired results. Driving robots can be incredibly fun and allows for finer control of motion than a human with a brush. Because results appear in real time, artists can create multiple artworks in a short amount of time.

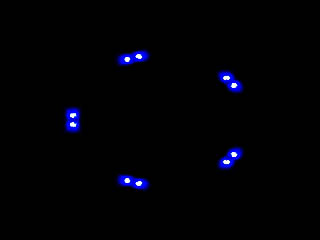

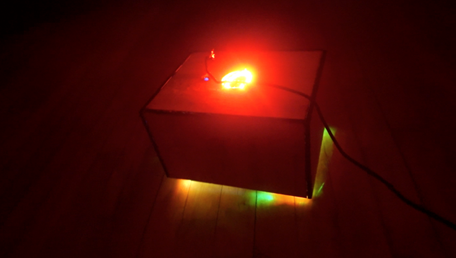

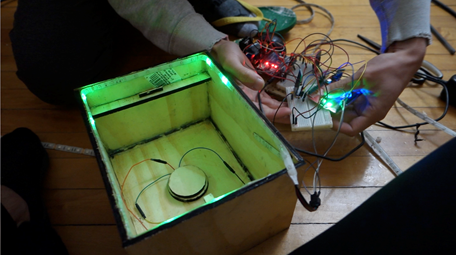

The project consists of three major components: the robot, the mobile app, and the light painting hardware/software. A modified RC car connected to an Arduino serves as the robot's mobile base. It is driven with a wireless controller that allows for precise movements and gives an overall “gaming” feel when driving the robot. A 16-LED NeoPixel ring, LED strips, and a Bluetooth module are connected to the RC car and housed in a black case. The case hides the internal wiring and indicator lights of the electronics while highlighting the NeoPixel ring and LED strips and keeping them positioned well for light painting.

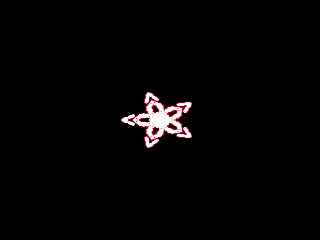

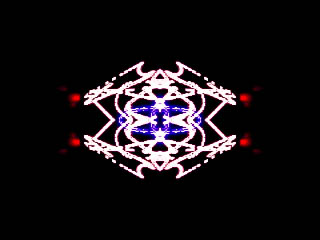

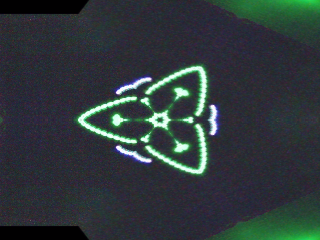

To control the LEDs, a mobile app was created that connects to the Bluetooth module on the robot. From the app, users can pick the color and animation of the NeoPixel lights, selecting from presets such as all green lights, strobing white lights, and various rainbow effects. Users can also control the LED strips positioned at the bottom of the robot. These LEDs reflect off the ground and create blending effects in the light painting. Combining these LEDs produces different colors in the light painting; for example, turning on the red and blue LED strips together creates a purple glow.
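The purple glow from the red and blue strips is consistent with additive color mixing, where overlapping light sources add up channel by channel. The following is a minimal sketch of that idea, not the project's code: the function name `mix_additive` and the 8-bit RGB values are assumptions chosen for illustration.

```python
def mix_additive(*colors):
    """Add RGB colors channel by channel, clamping each channel to 255."""
    mixed = [0, 0, 0]
    for color in colors:
        for channel, value in enumerate(color):
            mixed[channel] = min(255, mixed[channel] + value)
    return tuple(mixed)


# Hypothetical full-brightness values for the red and blue strips.
RED = (255, 0, 0)
BLUE = (0, 0, 255)

print(mix_additive(RED, BLUE))  # (255, 0, 255), a magenta/purple
```

In practice the color the camera records also depends on the floor surface, the strip brightness, and the exposure, so this only approximates what appears in the painting.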

Another major aspect of the app is that it allows multiple users to interact with the project. One person can drive the robot while another selects the “brush” to paint with. This means that at least two people have to work together to create a work of art, adding new depth and creativity to the paintings. Ideally, the project could be showcased in a museum or similar location where many users, including strangers, can come and create artworks together.

To capture the light painting in real time, the Light Paint Live software was used. This software can run from a webcam, tracking light and adjusting exposure to best capture it. For better quality, a DSLR camera was used instead of a webcam, attached to a raised tripod and pointed down at the floor.
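One common way to approximate a long exposure from a stream of video frames is a "lighten" blend, which keeps the brightest value seen at each pixel so that a moving light leaves a trail. The sketch below illustrates that general technique only; it is not taken from Light Paint Live, whose internals are not described here. The helper names `lighten_blend` and `paint` and the tiny grayscale frames are assumptions.

```python
def lighten_blend(canvas, frame):
    """Return a new image holding the brighter value at each pixel."""
    return [
        [max(canvas_px, frame_px) for canvas_px, frame_px in zip(canvas_row, frame_row)]
        for canvas_row, frame_row in zip(canvas, frame)
    ]


def paint(frames):
    """Accumulate equally sized grayscale frames into one light painting."""
    height, width = len(frames[0]), len(frames[0][0])
    canvas = [[0] * width for _ in range(height)]  # start from a black canvas
    for frame in frames:
        canvas = lighten_blend(canvas, frame)
    return canvas


# Three 2x3 frames in which a single bright pixel moves along the top row.
frames = [
    [[255, 0, 0], [0, 0, 0]],
    [[0, 255, 0], [0, 0, 0]],
    [[0, 0, 255], [0, 0, 0]],
]

trail = paint(frames)
print(trail)  # [[255, 255, 255], [0, 0, 0]]
```

Because the blend never darkens a pixel, any stray ambient light also accumulates, which is one reason a dark room and a dark robot case help keep the background clean.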

Adobe Lightroom was also used to post-process the images, adding contrast and removing some of the background noise.

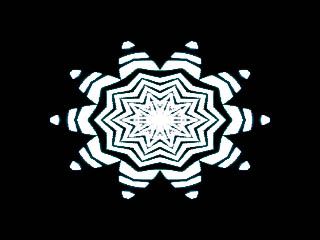

Ultimately, the project has been a success. Some of the light paintings have turned out really cool, and features such as the kaleidoscope effect add a symmetry that is hard to reproduce with traditional light painting. Users who have interacted with the project said that it is fun to control and that they would want to spend more time using the technology to create different works of art. Future improvements could include a sound component, such as changing the LEDs to the rhythm of a song, to make the experience fully immersive. It would also be beneficial to fine-tune the robot's controls for more precise movement.